Security‑Auditing AI Skills: Turning GenAI From Gimmick Into Guardrail

AI security doesn't have to mean annual pen tests and overloaded security teams. Luke Encrapera, Founder of AI and Sons, breaks down security-auditing AI skills—narrow, tool-first AI “guardians” that give you continuous assurance without slowing down delivery.

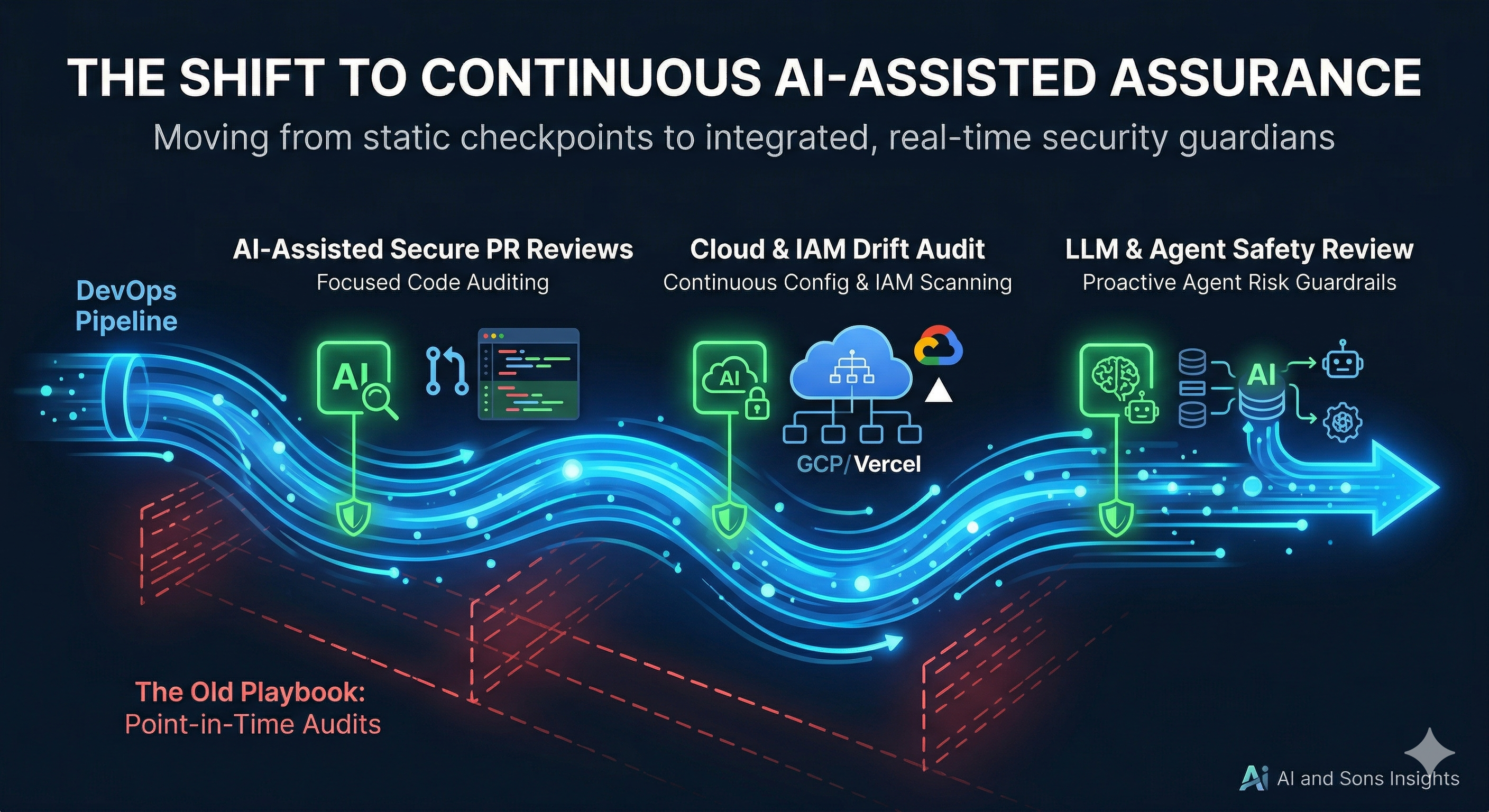

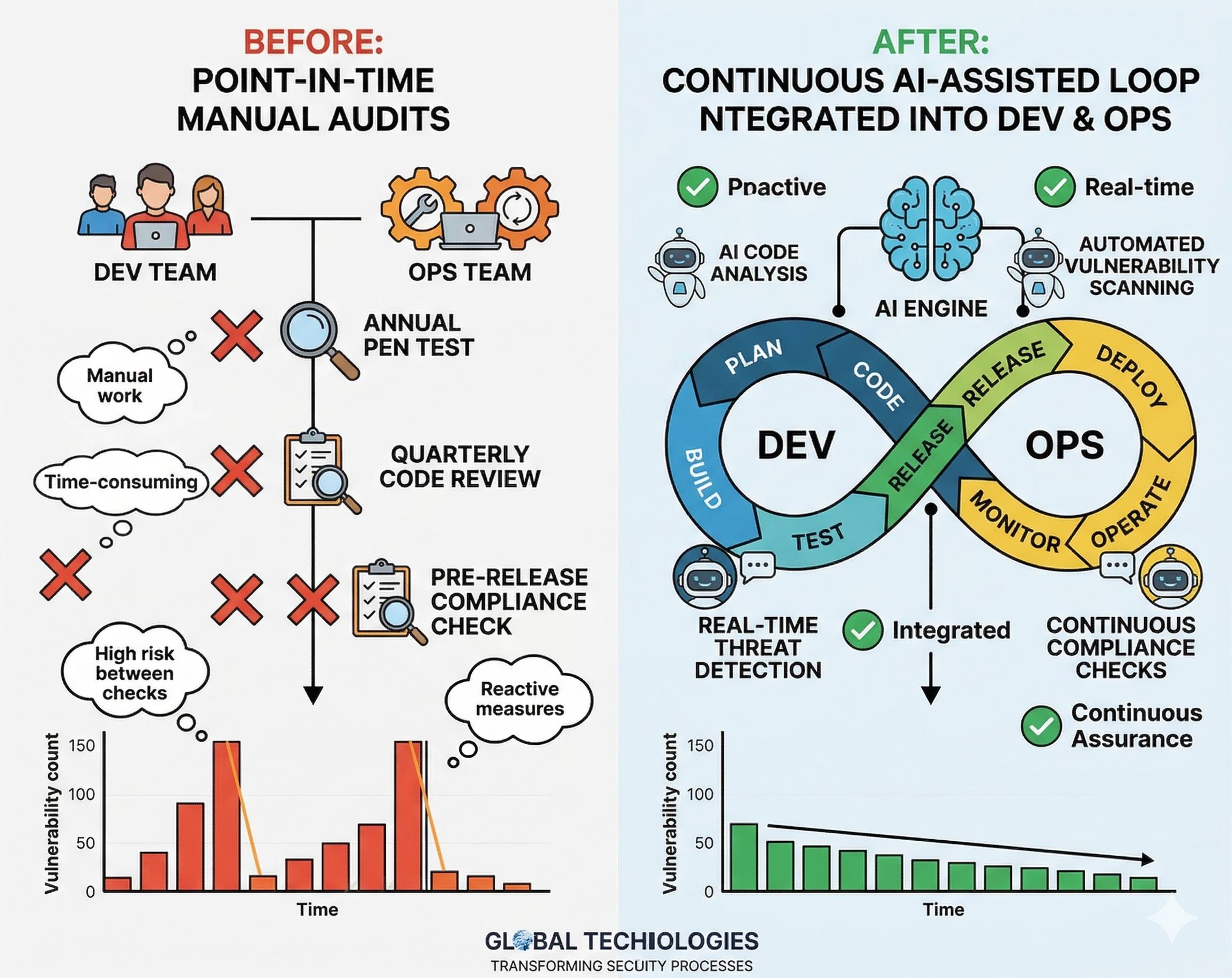

You're not crazy for feeling like "AI security" has become a wall of buzzwords. Underneath the noise, there's a very real shift happening: we're moving from point‑in‑time security checks to continuous, AI‑assisted assurance that runs alongside how we actually build and ship software.

This post is my take on that shift, and how we at AI and Sons approach it with what I call security‑auditing AI skills—small, focused AI “guardians” that keep an eye on your code, cloud, and AI agents without getting in your way.

It’s written for our Insights blog, but I’m also aiming it squarely at the LinkedIn crowd: founders, engineering leaders, and security folks who are trying to ship real stuff on modern stacks without waking up to an incident report.

Why the old security playbook doesn’t survive AI

The traditional security playbook looks something like this:

- Once a year, pay for a pen test.

- Once a quarter, run a broader internal audit.

- In between, hope your scanners in CI catch anything too embarrassing.

That model was already shaky in a world of microservices and cloud. With AI and agents in the mix, it’s done.

Here’s why:

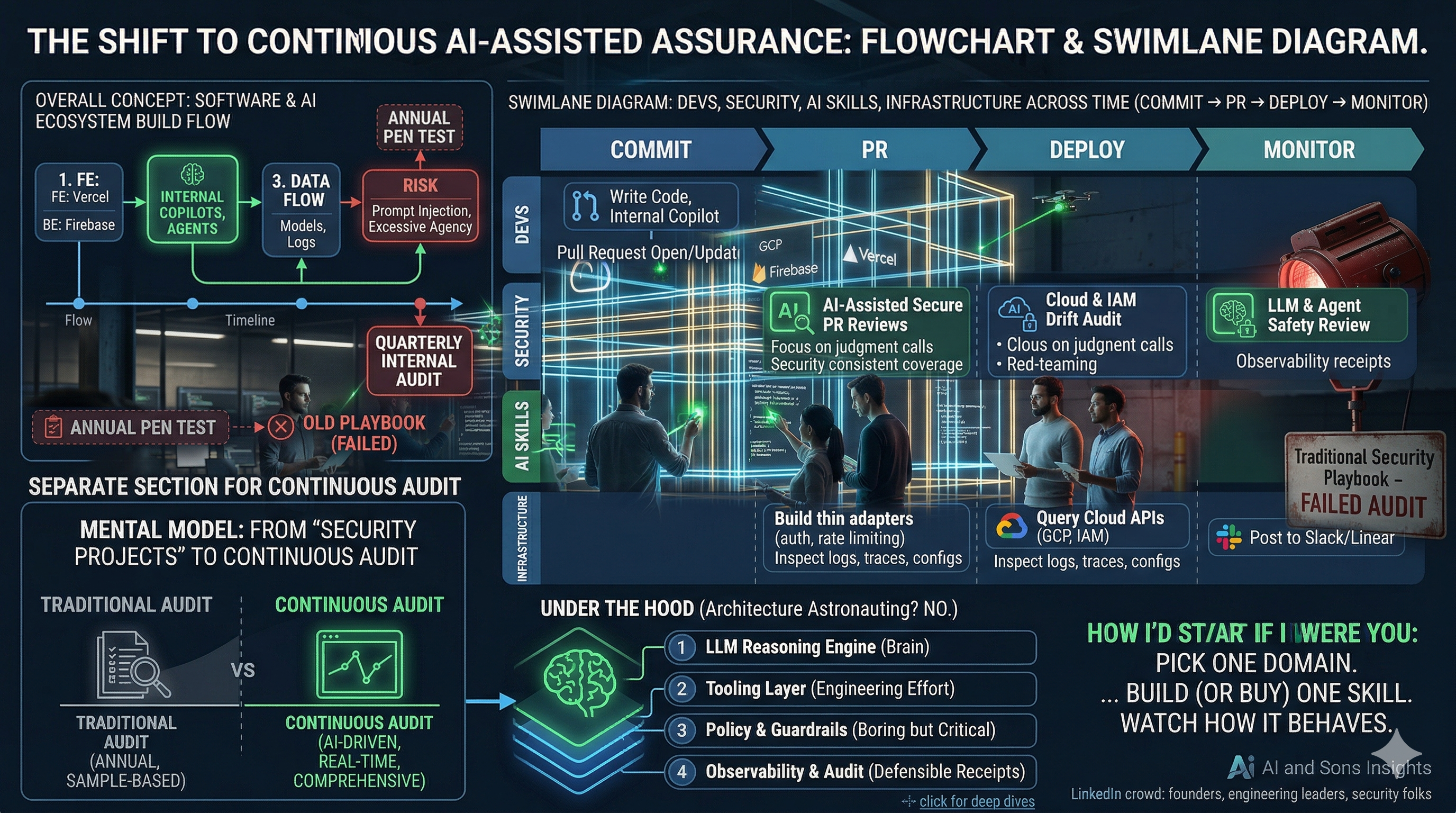

- Your system isn’t a single app anymore. It’s frontends on Vercel, backends on Firebase/GCP, mobile clients, background jobs, and a small constellation of SaaS tools your team hooked up “for productivity.”

- Your AI footprint is growing faster than your security team. LLM features, internal copilots, agents that can file tickets, touch data, maybe even change configs.

- The risk model is different. You’re dealing with prompt injection, insecure outputs, agents with too much autonomy, and data flowing through model prompts, embeddings, and logs.

If you try to manage that with one‑off audits and an overloaded security team, you end up with one of two predictable outcomes: the org slows down, or you ship a lot of risk and hope “it’ll probably be fine.” I don’t like either. So we do something else.

What I mean by “security‑auditing AI skills”

At AI and Sons, we use a specific pattern that’s been working well enough to deserve a name.

A security‑auditing AI skill is a small, narrowly scoped capability that uses:

- Deterministic tools for ground truth (SAST, SCA, secret scanners, cloud APIs, logs), and

- An LLM to interpret, correlate, and explain what those tools find,

to answer one question continuously, like:

- “Is this pull request about to make auth or data handling worse?”

- “Has any IAM policy or cloud config drifted into dangerous territory since yesterday?”

- “Are our LLM agents operating inside the risk box we think they are?”

The important bits:

- Narrow scope. One problem per skill. No “AI, secure everything.”

- Tool‑first. The AI orchestrates and explains; it doesn’t directly mutate production systems.

- Structured outputs. It returns machine‑readable findings (severity, what changed, mapping to OWASP/NIST, suggested remediation).

- Guardrails baked in. Permissions, logging, and LLM‑specific safety controls are part of the design, not last‑minute duct tape.

You can think of these as reusable security micro‑services that just happen to have a brain.
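To make "structured outputs" concrete, here is a minimal sketch of what a finding record could look like. The `Finding` and `Severity` names and the exact fields are my own illustration of the bullets above, not a fixed schema.

```python
import json
from dataclasses import asdict, dataclass
from enum import Enum


class Severity(str, Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"
    CRITICAL = "critical"


@dataclass(frozen=True)
class Finding:
    """One machine-readable result emitted by a skill (hypothetical schema)."""
    skill: str                        # which skill produced the finding
    severity: Severity
    what_changed: str                 # the change that triggered the finding
    framework_refs: tuple[str, ...]   # free-text mappings, e.g. to OWASP or NIST items
    remediation: str                  # suggested fix, for a human to review

    def to_json(self) -> str:
        record = asdict(self)
        record["severity"] = self.severity.value  # store the plain string value
        return json.dumps(record, sort_keys=True)
```

Because every skill emits the same shape, downstream consumers (PR comments, tickets, dashboards) can treat findings from different skills uniformly.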

The mental model: from “security projects” to continuous assurance

A useful analogy is what’s been happening in the audit world. Auditors are going from annual, sample‑based reviews to continuous audit, where AI monitors controls and data streams all the time instead of checking a tiny slice once a year.

Security‑auditing skills are basically continuous audit for your software and AI systems:

- Instead of one big AppSec review after a major release, you get smaller, focused reviews on every PR and every meaningful infra change.

- Instead of one big “AI risk assessment” PowerPoint, you get ongoing checks on how your agents are actually behaving in the wild.

This is not about replacing humans; it’s about letting humans focus on judgment calls, not log‑grepping and Jira archaeology.

Under the hood (without too much architecture astronauting)

No matter which vendor slide you look at, the serious implementations all share the same four layers. Here’s how I frame it when we design this with clients.

1. LLM reasoning engine

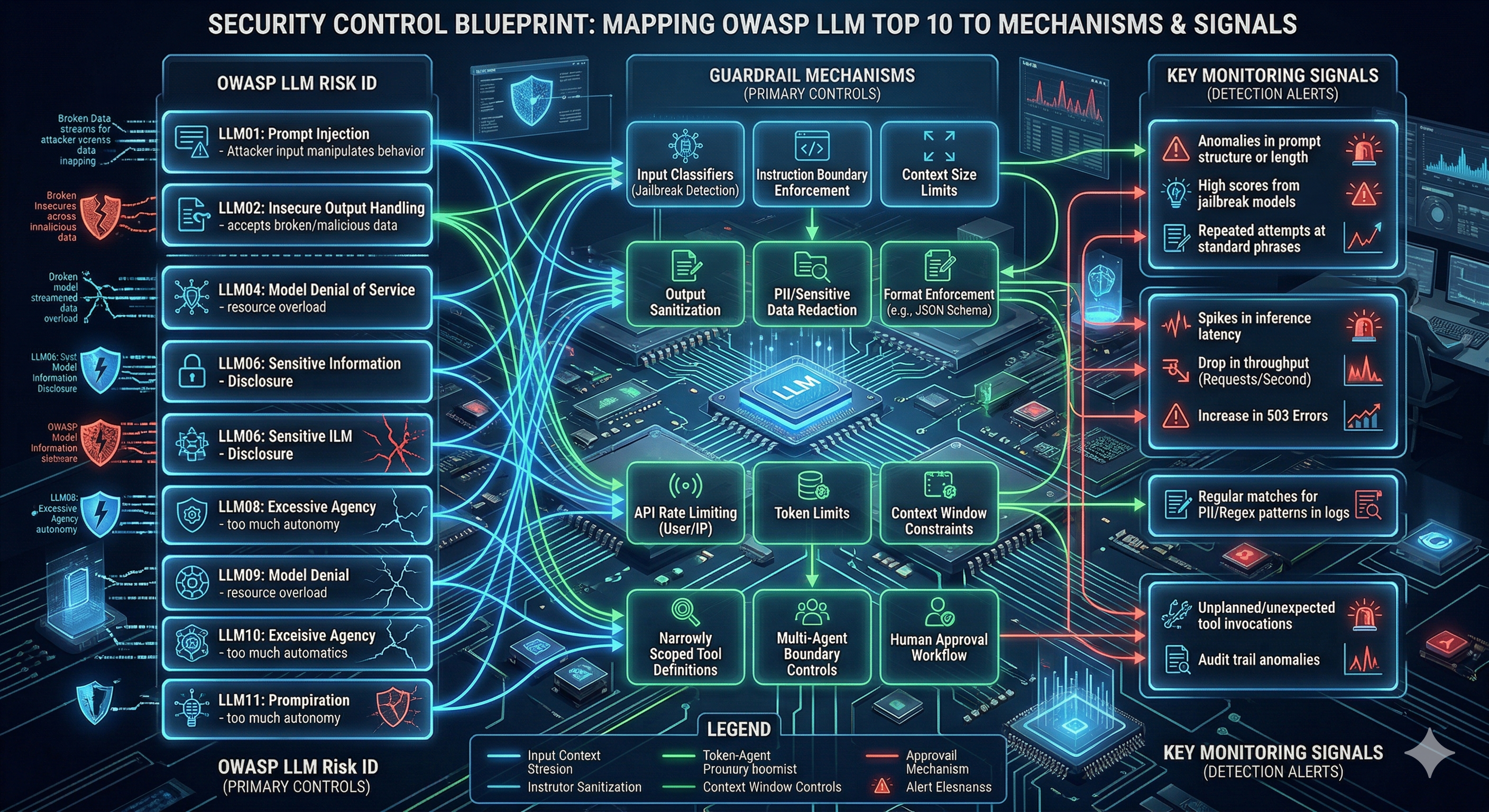

This is the AI brain. Its job is to read structured and semi‑structured inputs (diffs, configs, scanner results, IAM policies, agent logs), apply a policy or checklist (your standards, OWASP LLM Top 10, NIST AI guidance), and produce understandable, actionable analysis and suggested next steps.

Key constraint: it does not get raw, unfiltered access to everything. Data goes through a gate—redaction, scoping, sometimes aggregation—before it hits the model.
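As a rough sketch of that gate, the function below redacts a couple of sensitive patterns and caps the input size before anything reaches the model. The patterns, labels, and the `max_chars` default are illustrative assumptions; a real gate would lean on a vetted secret and PII scanner rather than two regexes.

```python
import re

# Illustrative patterns only (assumptions), not a complete secret/PII catalogue.
_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "BEARER_TOKEN": re.compile(r"(?i)bearer\s+[A-Za-z0-9._~+/-]+=*"),
}


def redact(text: str, max_chars: int = 4000) -> tuple[str, dict[str, int]]:
    """Replace sensitive matches with placeholders, then truncate.

    Returns the gated text plus a per-pattern count of redactions,
    so the gate itself produces something you can log.
    """
    counts: dict[str, int] = {}
    for label, pattern in _PATTERNS.items():
        text, n = pattern.subn(f"[REDACTED_{label}]", text)
        counts[label] = n
    return text[:max_chars], counts
```

Returning the counts alongside the text means a spike in redactions can show up as a metric instead of disappearing silently.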

2. Tooling layer

This is where most of the engineering effort lives. You build thin, well‑typed adapters to:

- Run SAST/SCA/secret scans in CI.

- Query your cloud and SaaS APIs (GCP, IAM, storage, functions, logs, etc.).

- Inspect LLM/agent prompts, tools, and runtime traces.

- Post to Slack, create Linear issues, add PR comments, and update dashboards.

Each adapter has its own auth, rate limiting, and “no really, this is read‑only” guardrails.
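Here is one way a "no really, this is read‑only" adapter could be sketched. `ReadOnlyAdapter`, its prefix allowlist, and the simple interval-based rate limit are all hypothetical. The name check is only a second line of defense: the credential behind the client should itself be scoped to a read-only role.

```python
import time
from typing import Any


class ReadOnlyAdapter:
    """Wraps a client and only forwards calls whose names look like reads."""

    READ_PREFIXES = ("get_", "list_", "describe_")  # assumed naming convention

    def __init__(self, client: Any, min_interval_s: float = 0.0):
        self._client = client
        self._min_interval_s = min_interval_s
        self._last_call = float("-inf")  # so the first call never waits

    def call(self, method: str, *args: Any, **kwargs: Any) -> Any:
        if not method.startswith(self.READ_PREFIXES):
            raise PermissionError(f"{method!r} is not an allowed read operation")
        # Crude rate limit: keep at least min_interval_s between calls.
        wait = self._min_interval_s - (time.monotonic() - self._last_call)
        if wait > 0:
            time.sleep(wait)
        self._last_call = time.monotonic()
        return getattr(self._client, method)(*args, **kwargs)
```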

3. Policy and guardrails

This is where you bake in the boring but critical stuff:

- OWASP LLM Top 10 mitigation strategies (prompt injection defenses, output validation, protection against excessive agency).

- Enterprise GenAI security patterns (input/output filtering, PII redaction, approval flows for high‑impact actions).

- Your own rules (“Prod access is read‑only for all skills,” “No agent is allowed to rotate credentials without a human in the loop”).
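The two example rules quoted above are simple enough to express as code. This is a minimal sketch; the `ActionRequest` shape, the action names, and the `HIGH_IMPACT_ACTIONS` set are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ActionRequest:
    skill: str
    action: str            # e.g. "read", "write", "rotate_credentials"
    environment: str       # e.g. "prod", "staging"
    human_approved: bool = False


# Hypothetical list of actions that always need a human in the loop.
HIGH_IMPACT_ACTIONS = {"rotate_credentials"}


def evaluate(request: ActionRequest) -> tuple[bool, str]:
    """Return (allowed, reason) for a requested action."""
    if request.environment == "prod" and request.action != "read":
        return False, "prod access is read-only for all skills"
    if request.action in HIGH_IMPACT_ACTIONS and not request.human_approved:
        return False, "high-impact action requires human approval"
    return True, "allowed"
```

Keeping the policy in plain, deterministic code means the LLM never decides whether its own action is allowed.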

4. Observability and audit

If you’re going to let AI participate in security, you need receipts: full logs of prompts, tool calls, and outputs; metrics (alerts per week, time to triage, false positive rates, guardrail hits); and artifacts you can show an auditor or your board. This is what makes the whole thing defensible. Without it, you just have “vibes‑driven AI security.”
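One way to keep those receipts tamper-evident is to chain each log record to the previous one with a hash. Hash chaining is my own suggestion here, not the only option, and the record fields below are assumptions.

```python
import hashlib
import json


def _digest(prev_hash: str, body: dict) -> str:
    payload = json.dumps(body, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_record(log: list[dict], skill: str, prompt: str,
                  tool_calls: list[str], output: str, timestamp: str) -> dict:
    """Append one audit record, linked to the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else ""
    body = {"skill": skill, "prompt": prompt, "tool_calls": tool_calls,
            "output": output, "timestamp": timestamp, "prev_hash": prev_hash}
    record = {**body, "hash": _digest(prev_hash, body)}
    log.append(record)
    return record


def verify(log: list[dict]) -> bool:
    """Recompute every hash; any edited or reordered record breaks the chain."""
    prev_hash = ""
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if body["prev_hash"] != prev_hash or record["hash"] != _digest(prev_hash, body):
            return False
        prev_hash = record["hash"]
    return True
```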

Three security‑auditing skills I’d install almost everywhere

There are lots of variations, but three patterns keep showing up and delivering value fast.

1. AI‑assisted secure PR reviews

The problem: Traditional SAST in CI spams devs with noisy findings. Most of it gets ignored. Real issues slip through.

The skill: Triggered on PR open/update, it runs targeted SAST/SCA/secret scans on the diff and feeds the results plus the diff into an LLM with a security‑first prompt. The model groups, deduplicates, and ranks the issues, then leaves targeted PR comments with context and suggested fixes.

Why it works: Developers see fewer, better findings. Security gets consistent coverage without having to sit in every review. Over time, this becomes a kind of “muscle memory coach” for the team: people start internalizing the patterns.
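Part of the noise reduction can happen deterministically before the model sees anything. The sketch below assumes scanner results arrive as dicts with `rule`, `file`, `line`, and `severity` keys (a hypothetical shape) and keeps only unique findings on lines the PR actually changed.

```python
SEVERITY_ORDER = {"critical": 0, "high": 1, "medium": 2, "low": 3}


def prepare_findings(raw: list[dict], changed_lines: dict[str, set[int]]) -> list[dict]:
    """Deduplicate scanner findings, drop those outside the diff, rank by severity.

    changed_lines maps a file path to the set of line numbers touched by the PR.
    """
    seen: set[tuple[str, str, int]] = set()
    kept: list[dict] = []
    for finding in raw:
        key = (finding["rule"], finding["file"], finding["line"])
        if key in seen:
            continue  # same rule on the same line, reported twice
        if finding["line"] not in changed_lines.get(finding["file"], set()):
            continue  # not part of this PR's diff
        seen.add(key)
        kept.append(finding)
    # Unknown severities sort last.
    return sorted(
        kept,
        key=lambda f: (SEVERITY_ORDER.get(f["severity"], len(SEVERITY_ORDER)),
                       f["file"], f["line"]),
    )
```

The LLM then gets a short, ranked list to explain and group, rather than the full scanner output.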

2. Cloud & IAM drift audit

The problem: Permissions and configs drift. Someone adds “just one” wildcard permission to unblock a deploy, then forgets to come back and fix it.

The skill: Runs on a schedule (e.g., nightly) and on certain change events, uses cloud APIs to inventory IAM roles, service accounts, storage buckets, policies, etc., compares current state against baselines (CIS, NIST, your own least‑privilege definitions), and uses an LLM to group findings by service/owner, explain risk in plain language, and suggest concrete remediation.

Why it works: You chip away at risk daily instead of pretending “we’ll do IAM cleanup next quarter.” The AI handles the slog of reading and correlating JSON policies; humans handle priorities and tradeoffs.
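The comparison step is plain set arithmetic once the inventory is normalized. This sketch assumes both baseline and current state have already been flattened into a mapping of principal to permission strings. That shape and the permission names in the usage note are hypothetical, not any cloud provider's actual policy format.

```python
def find_drift(baseline: dict[str, set[str]],
               current: dict[str, set[str]]) -> list[dict]:
    """Report permissions present now that the baseline does not allow.

    Wildcard grants are ranked higher because they are the classic
    "just one permission to unblock a deploy" shortcut.
    """
    findings: list[dict] = []
    for principal, permissions in sorted(current.items()):
        added = permissions - baseline.get(principal, set())
        for permission in sorted(added):
            findings.append({
                "principal": principal,
                "permission": permission,
                "severity": "high" if "*" in permission else "medium",
            })
    return findings
```

For example, a service account whose baseline is `{"storage.objects.get"}` and whose current state also includes `"storage.*"` would yield one high-severity finding for the wildcard.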

3. LLM & agent safety review

The problem: It’s way too easy to ship an agent with more power than you intended—and you often don’t discover that until a red team or an incident.

The skill: Examines agent definitions (prompts, tools, scopes, data sources, and the environments they run in), evaluates them against OWASP LLM Top 10 and GenAI security guidance (prompt injection, insecure output handling, data leakage, excessive agency, denial‑of‑service vectors), and produces a pre‑launch report.

Why it works: You get a repeatable, tech‑aware review step instead of vague “AI is scary” conversations. Over time, this becomes a checklist and pipeline step that keeps your AI roadmap from outpacing your governance.
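A couple of these checks can run as static rules over the agent definition before any LLM is involved. The `Tool` and `AgentDefinition` shapes below are assumptions about how an agent might be described, and the two rules cover only a small slice of the risks listed above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    can_write: bool
    requires_approval: bool = False


@dataclass(frozen=True)
class AgentDefinition:
    name: str
    tools: tuple[Tool, ...]
    reads_untrusted_input: bool   # e.g. web pages, inbound email, user uploads


def review_agent(agent: AgentDefinition) -> list[str]:
    """Return human-readable issues for a pre-launch report."""
    issues: list[str] = []
    unapproved = [t.name for t in agent.tools if t.can_write and not t.requires_approval]
    if unapproved:
        issues.append(
            "excessive agency: write-capable tools without approval: "
            + ", ".join(unapproved)
        )
    if agent.reads_untrusted_input and any(t.can_write for t in agent.tools):
        issues.append("prompt injection: untrusted input can reach write-capable tools")
    return issues
```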

Guardrails are not optional

It’s worth calling this out explicitly: the industry consensus (and frankly, my personal line in the sand) is that AI without guardrails in security‑sensitive flows is irresponsible.

Some practical non‑negotiables we use:

- Least privilege identities for every skill. No shared “super agent” accounts. Ever.

- Input and output filtering tuned to LLM‑specific risks. Prompt injection detection, PII stripping, and validation for anything that might become executable or infrastructure‑touching.

- Auditability by design. Anything the skill sees or says can be traced and reproduced. If you can’t explain why a skill made a given recommendation, you’ve lost control.

- Progressive autonomy. Start in read‑only “advisor” mode. Let the skill earn trust with telemetry and red‑teaming before you allow it to participate in any changes, and even then, under tight constraints.

If a vendor or internal project is promising “fully autonomous AI security” without showing you how it handles these points, that’s a red flag.
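Progressive autonomy is easier to enforce when the promotion criteria are written down. Here is a minimal sketch; the three levels, the `min_reviewed` and `min_precision` thresholds, and the red-team flag are all hypothetical values you would tune for your own risk appetite.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    ADVISOR = 0    # read-only: comments and reports only
    PROPOSER = 1   # may open changes for human review
    ACTOR = 2      # may apply pre-approved, tightly constrained changes


def next_level(current: Autonomy, reviewed_findings: int, accepted_findings: int,
               red_team_passed: bool, min_reviewed: int = 50,
               min_precision: float = 0.9) -> Autonomy:
    """Promote a skill one level only when telemetry and red-teaming support it."""
    if current == Autonomy.ACTOR:
        return current  # already at the top level
    if reviewed_findings == 0 or reviewed_findings < min_reviewed:
        return current  # not enough evidence yet
    if not red_team_passed:
        return current
    if accepted_findings / reviewed_findings < min_precision:
        return current  # humans reject too many of its findings
    return Autonomy(current + 1)
```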

How I’d start if I were you

If you’re reading this as an engineering or security leader and thinking, “Okay, but how do we actually do this?”—here’s the roadmap I’d recommend.

- Pick one domain. Code (PRs), Cloud/IAM, or LLM/agents. Don’t try to “secure everything with AI” in the first sprint.

- Build (or buy) one skill. Scope it tightly, wire it into existing workflows (CI, cloud jobs, change management, Slack/Linear), and instrument it aggressively.

- Watch how it behaves. Track useful vs. noisy findings, developer and security team reactions, and guardrail hits and weird edge cases.

- Iterate, then expand sideways. Once you’ve got a rhythm in one area, cloning the pattern to a second domain is much easier—you’re mostly reusing architecture and guardrails, not reinventing the wheel.
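For the "watch how it behaves" step, even a tiny summary over triage outcomes goes a long way. This sketch assumes each triaged finding is recorded as a dict with a `verdict` of `"useful"` or `"noisy"` and a `minutes_to_triage` value; both field names are illustrative.

```python
from statistics import median


def summarize_triage(records: list[dict]) -> dict:
    """Summarize how a skill's findings were received by the people triaging them."""
    total = len(records)
    if total == 0:
        return {"total": 0, "noise_rate": None, "median_minutes_to_triage": None}
    noisy = sum(1 for r in records if r["verdict"] == "noisy")
    return {
        "total": total,
        "noise_rate": noisy / total,
        "median_minutes_to_triage": median(r["minutes_to_triage"] for r in records),
    }
```

A rising noise rate is usually the first sign that a skill's scope or prompt needs tightening before you expand to a second domain.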

This is exactly the approach we take with clients at AI and Sons: start small but real, prove value, then scale.

Why I’m writing this (and what’s next)

We started AI and Sons because we could see the gap opening up: teams were adopting agents and GenAI faster than they were updating their security and governance models.

Security‑auditing AI skills are our answer to that gap: they fit how modern teams actually work, they lean on the tools you already own, and they give security continuous visibility without turning into a drag on delivery.

On the AI and Sons Insights blog I’ll be following this up with deeper dives, including:

- A teardown of a secure‑PR auditing skill (prompts, workflows, and examples).

- A practical checklist for LLM & agent safety reviews mapped directly to OWASP LLM Top 10.

- How to wire Slack and your ticketing system into this so findings feel like part of the flow, not a side channel.

If you’re on LinkedIn and this resonated, I’d love for you to share it internally and with anyone thinking about “what does responsible AI security actually look like in practice?”

And if you’re already experimenting here and want help getting from clever prototypes to production‑grade guardrails, that’s literally what we do all day.
