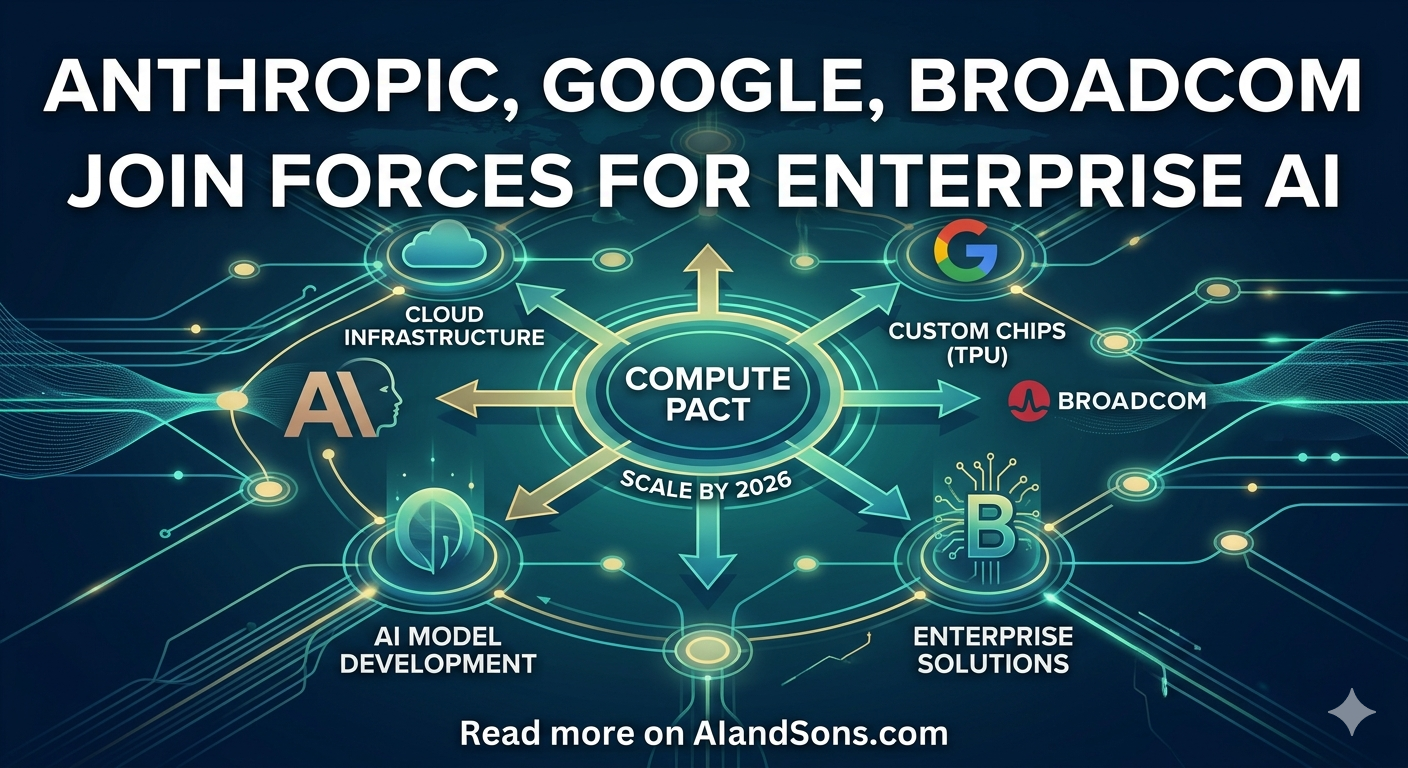

Anthropic’s Google-Broadcom Compute Pact Signals a New Enterprise AI Scale Phase

Anthropic’s new Google-Broadcom compute agreement reframes AI competition around capacity certainty, operational resilience, and enterprise-grade delivery speed.

What Changed On April 6, 2026

On April 6, Anthropic announced a new infrastructure agreement with Google and Broadcom that materially expands the amount of TPU-based compute it expects to access starting in 2027. The company framed this as its largest compute commitment to date and tied it directly to accelerating demand for Claude in enterprise settings. In the same announcement, Anthropic said run-rate revenue surpassed $30 billion and that the number of customers spending more than $1 million annually more than doubled in under two months, crossing 1,000 accounts.

That combination is the signal business leaders should focus on: this is not only a hardware procurement story. It is a demand-backed capacity strategy. When a frontier model provider publicly links demand growth, a fast-growing base of large accounts, and multi-year infrastructure commitments, it suggests that model quality alone is no longer the differentiator. Delivery reliability, predictable latency under heavy load, and global capacity planning are becoming product features in their own right.

Why The Broadcom 8-K Matters

Anthropic’s announcement became more concrete when Broadcom filed a Form 8-K the same day. In that filing, Broadcom disclosed two linked arrangements: a long-term agreement to develop and supply future generations of Google’s custom TPUs, and a supply assurance agreement covering networking and related components for Google’s next-generation AI racks, running as late as 2031. The filing also states that, beginning in 2027, Anthropic will access approximately 3.5 gigawatts of compute capacity through Broadcom as part of its broader multi-gigawatt commitment.

For executives, the important takeaway is the move from press-release language to regulatory language. SEC filings typically narrow ambiguity around timeline, counterparties, and material obligations. This filing does not spell out every commercial term, but it does anchor the partnership in a formal disclosure context and surfaces a key caveat: use of the expanded compute capacity is tied to Anthropic’s continued commercial success. In plain terms, demand performance still gates how much of this capacity is consumed.

The Strategic Pattern Behind The Headlines

This deal also clarifies something many teams have been sensing for the past year: frontier AI operations are shifting toward multi-platform infrastructure portfolios. Anthropic explicitly describes a mixed stack across Google TPUs, AWS Trainium, and NVIDIA GPUs, while keeping AWS as its primary training cloud partner. That is a practical architecture signal, not marketing filler. Multi-platform compute lets model providers route workloads by price-performance profile, reduce single-vendor concentration risk, and protect service continuity during supply or capacity shocks.

For enterprise adopters, this is relevant because provider-side infrastructure diversity often translates into better downstream service resilience. If your AI roadmap depends on a single provider for customer support automation, document intelligence, or internal copilots, continuity under peak traffic is as strategic as benchmark scores. The providers that can secure long-horizon compute, then operationalize it across cloud surfaces, are likely to win larger and stickier enterprise workloads.

Business Implications For Product And Platform Teams

First, capacity certainty increasingly affects roadmap confidence. Product teams can commit to more ambitious AI features when they trust that throughput and latency won’t degrade during launch cycles or seasonal spikes. Second, procurement conversations will continue to evolve from a narrow focus on per-token pricing to blended discussions that include service-level guarantees, regional availability, and contractual escalation paths for sudden usage growth.

Third, model portability matters more than ever. As infrastructure partnerships deepen, enterprises should avoid hard-wiring critical workflows to single-model assumptions unless there is a clear reason. Workload abstraction, prompt/evaluation portability, and fallback orchestration are no longer “nice to have.” They are the controls that preserve negotiating leverage and reduce business risk when the market shifts quickly.
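To make "fallback orchestration" concrete, here is a minimal Python sketch of one way to try providers in priority order. Everything in it is hypothetical: `Provider`, `complete_with_fallback`, and the stand-in callables are illustrative names, not any vendor's SDK, and a real implementation would wrap whatever client libraries a team already uses.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider fails for a request."""


@dataclass(frozen=True)
class Provider:
    name: str                       # hypothetical label, e.g. "primary"
    complete: Callable[[str], str]  # wraps whichever SDK call a team uses


def complete_with_fallback(
    prompt: str, providers: Sequence[Provider]
) -> tuple[str, str]:
    """Try providers in priority order; return (provider_name, response)."""
    errors: list[str] = []
    for provider in providers:
        try:
            return provider.name, provider.complete(prompt)
        except Exception as exc:  # in production, catch narrower error types
            errors.append(f"{provider.name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Stand-in callables (assumptions) that simulate an outage on the primary.
def flaky_primary(prompt: str) -> str:
    raise TimeoutError("capacity exhausted")


def stable_secondary(prompt: str) -> str:
    return f"summary of: {prompt}"


chain = [
    Provider("primary", flaky_primary),
    Provider("secondary", stable_secondary),
]
used, answer = complete_with_fallback("Q3 support tickets", chain)
print(used, "->", answer)
```

Keeping the provider behind a plain callable is the design choice that matters here: the workflow depends on a small interface rather than on one vendor's client, so swapping or reordering providers is a configuration change rather than a rewrite.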

Fourth, geographic compute placement is becoming a governance topic. Anthropic said the vast majority of this new compute will be in the United States, extending its previously announced U.S. infrastructure commitment. For regulated industries and multinational organizations, compute location can affect data-handling posture, legal review cycles, and deployment sequencing for region-specific products.

Opportunities And Risks In The Next 12 Months

The opportunity side is straightforward: more committed capacity can support faster model iteration, stronger enterprise uptime, and potentially lower unit costs if utilization is managed well. Enterprises integrating AI deeply into core workflows stand to benefit from a more predictable service envelope, especially when deployments move beyond pilot traffic into production-scale operations.

The risk side is equally real. Long-term infrastructure commitments can create pressure to maintain rapid commercial growth, particularly when expansion terms include consumption conditions. There is also execution complexity in coordinating model evolution, silicon roadmaps, networking architecture, and customer-facing reliability expectations across multiple partners. Even with better capacity visibility, integration and operations discipline remain decisive.

A second risk is strategic over-interpretation by buyers. One large infrastructure announcement does not eliminate the need for internal controls around model governance, evaluation quality, and security boundaries. Enterprises should treat provider momentum as useful context, not a substitute for their own AI assurance program.

What Leaders Should Do Now

- Reassess provider concentration risk: map critical AI workflows to provider dependencies and identify failover options for each tier-1 use case.

- Upgrade AI SLAs and contract language: include explicit expectations for latency, incident communication, and sustained peak-capacity behavior.

- Build model-portable evaluation suites: benchmark business outcomes across at least two model providers before major production rollouts.

- Track infrastructure disclosures as strategy inputs: include major partner announcements and regulatory filings in quarterly AI platform planning.
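
The first action item, mapping workflows to provider dependencies, can start as a very small inventory. The Python sketch below is one possible shape for it, under stated assumptions: the `Workflow` fields, the tier numbering, and the sample entries are all illustrative and not drawn from any real deployment.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Workflow:
    name: str
    tier: int                        # 1 = business-critical (assumed scale)
    primary: str                     # provider serving this workflow today
    fallbacks: tuple[str, ...] = ()  # providers already validated as failover


def concentration(workflows: list[Workflow]) -> dict[str, float]:
    """Share of workflows whose primary dependency is each provider."""
    counts = Counter(w.primary for w in workflows)
    return {provider: n / len(workflows) for provider, n in counts.items()}


def tier1_without_failover(workflows: list[Workflow]) -> list[str]:
    """Names of tier-1 workflows that have no validated fallback provider."""
    return [w.name for w in workflows if w.tier == 1 and not w.fallbacks]


# Illustrative inventory; the provider labels are placeholders.
inventory = [
    Workflow("support_automation", 1, "provider_a"),
    Workflow("document_intelligence", 1, "provider_a", ("provider_b",)),
    Workflow("internal_copilot", 2, "provider_a"),
    Workflow("marketing_drafts", 3, "provider_b"),
]

shares = concentration(inventory)
gaps = tier1_without_failover(inventory)
print("primary-provider share:", shares)
print("tier-1 workflows lacking failover:", gaps)
```

Counting workflows is a crude proxy. Weighting each entry by request volume or revenue exposure would give a more useful concentration figure, but even the simple version surfaces tier-1 use cases that have no failover path.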

In short, Anthropic’s latest Google-Broadcom agreement is best understood as a market-structure event. It underscores that enterprise AI competition is converging on a three-part equation: model capability, operational reliability, and durable access to compute. Teams that plan for all three dimensions will be better positioned than teams that optimize for one.
